TLC breadth-first search vs random simulation

Author: Igor Konnov

Date: April 30, 2026

Recently, I wrote a blog post on random walks that compared the state coverage of random walks for increasingly larger sets of experiments: 100 thousands, 1 million, 10 million, and even 100 million episodes. There, I used custom-built simulators in Rust to randomly walk through the state spaces of several TLA+ benchmarks: two-phase commit, readers-writers, and FPaxos.

A. Jesse Jiryu Davis noticed my blog post and wrote a message on the TLA+ Google group. Since the random walks in the blog post are not exactly the same as the random simulation in TLC, we both wondered how the TLC simulation mode compares to the model checker in terms of coverage and running times. Markus Kuppe shared the options to collect distinct state coverage in the TLC simulation mode, see the discussion.

The important distinction between the random walks and the TLC simulator is that a random walk chooses one successor state at each step, whereas TLC enumerates all successor states and then chooses one of them uniformly at random. Markus explains the rationale behind this design choice in TLC:

…enumerating all successors is useful for more than just choosing the next step: TLC can also check invariants on all generated successor states, not only on the one that ends up being sampled. That is a meaningful benefit when the goal is to catch bugs, not just drive a walk.

This new blog post explores this direction. To get more details about the bennchmarks, read the original blog post on random walks.

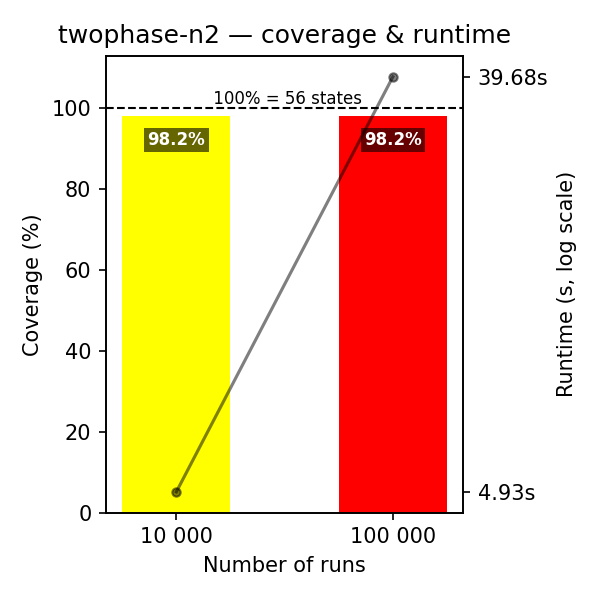

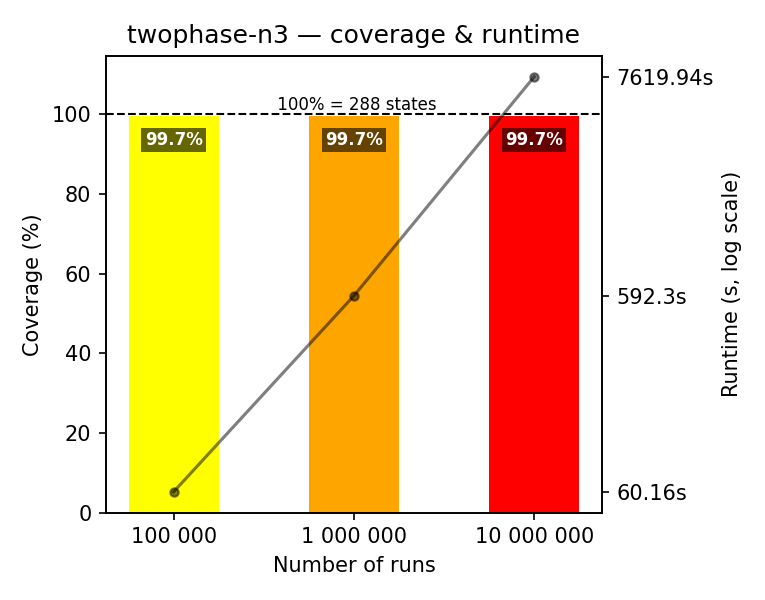

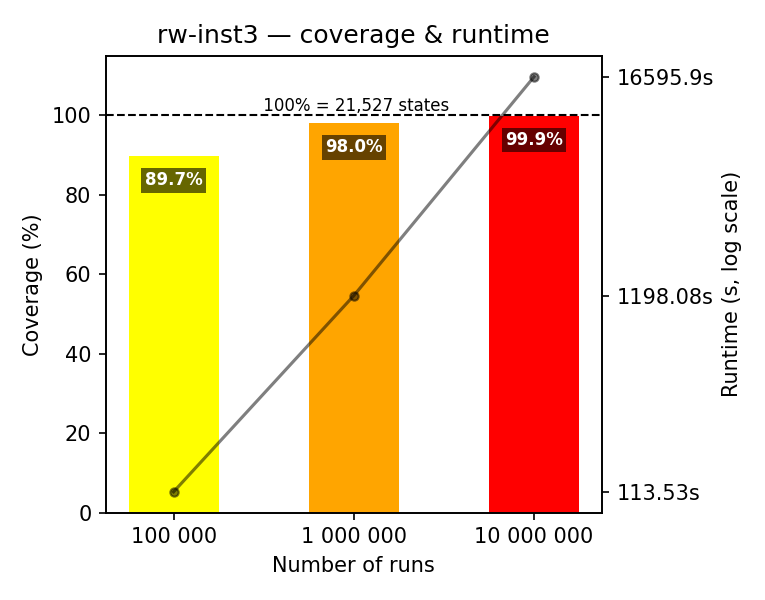

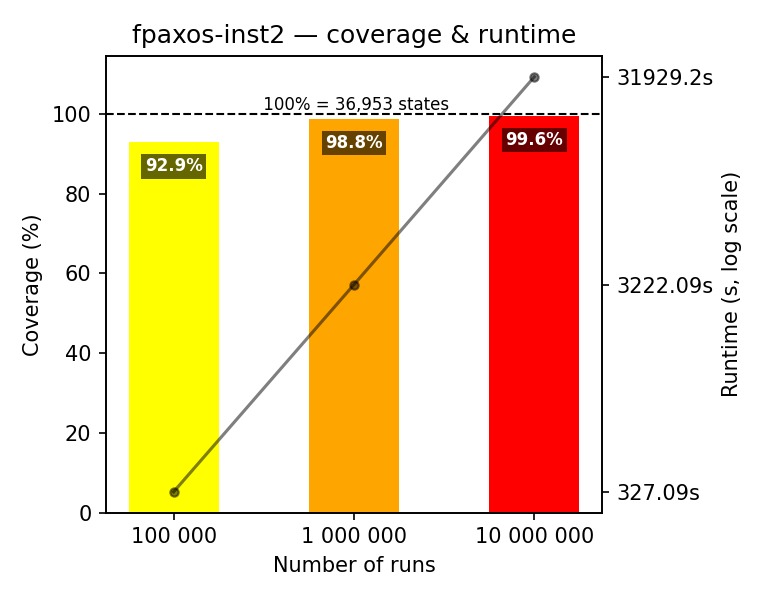

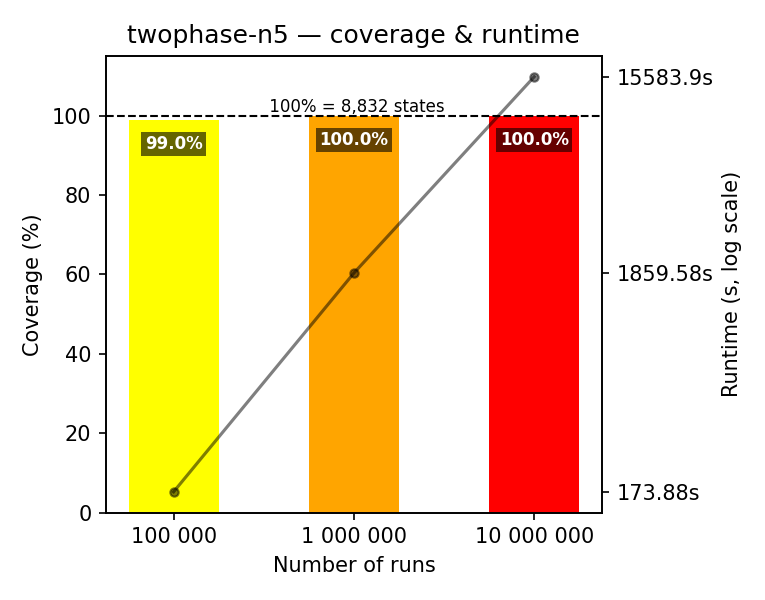

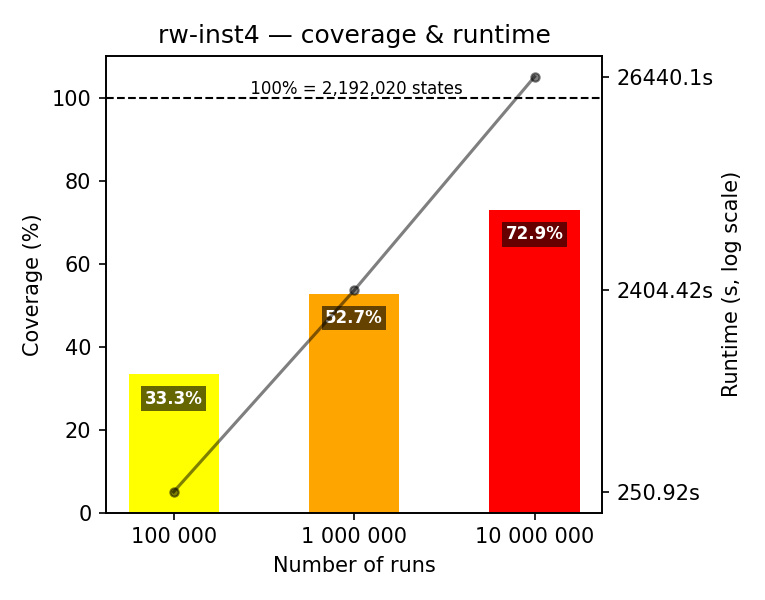

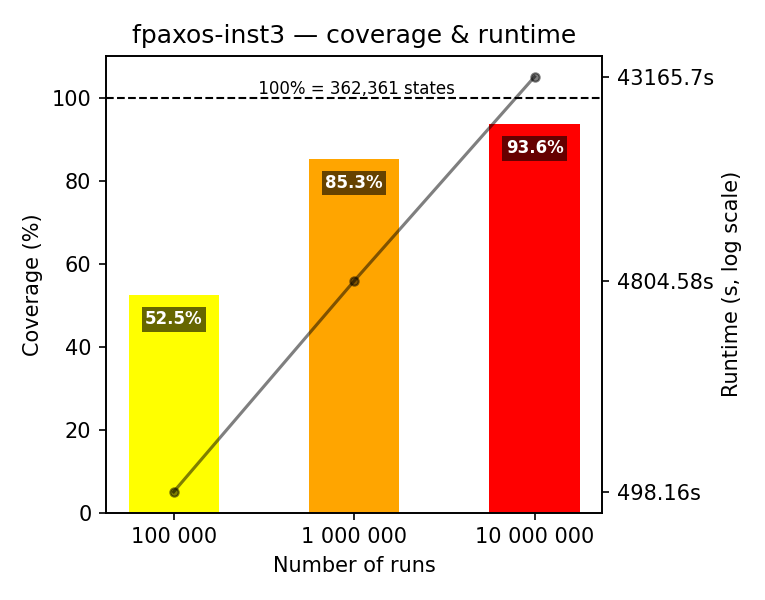

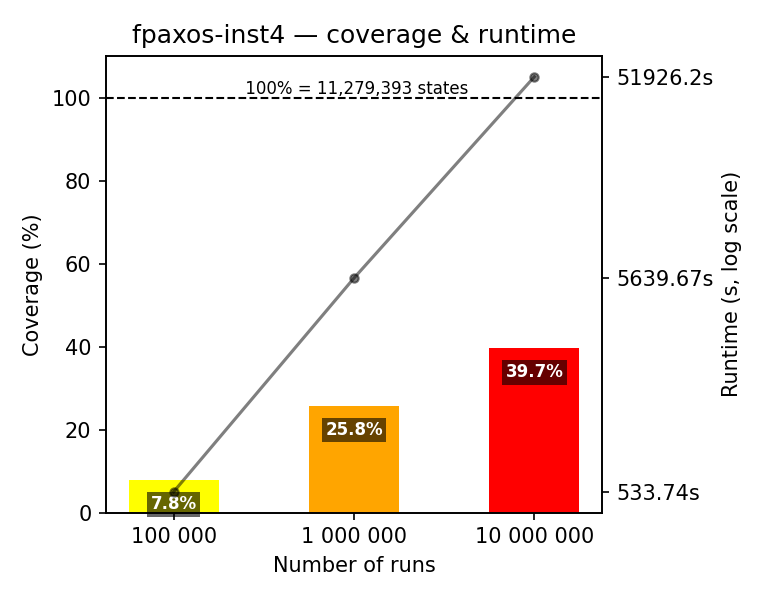

Look at two groups of figures below. They summarize the results of running random walks on specifications of three prominent distributed protocols: two-phase commit, readers-writers, and FPaxos (see Benchmarks). The figures show the coverage achieved by the TLC simulation mode, with 100% being the numbers of distinct states (reported by TLC). All running times are given for an AMD Ryzen 9 5950X processor (16 physical, 32 logical cores), 128 GB memory.

Importantly, the TLC simulations are run on a single worker, they are not running in parallel. We do that, in order to compute the state coverage precisely. When you look at running times, keep in mind that TLC can run multiple simulation workers in parallel.

In contrast to the previous blog posts, I do not provide the artifacts for download. AI slop forks are real. It still takes me several days to design and conduct the experiments on a beefy machine, as well as to find the right format to interpret and plot the data. It only takes 10-15 minutes to repackage the benchmarks and results with an AI tool, having the experimental data. Hence, I am sharing my lab book with the customers and researchers, upon request.

1. Coverage for minimal instances

In this set of experiments, we run TLC simulations for the minimal instances of the benchmarks. We start with the meaningful default of 100,000 simulation runs, with at most 100 steps per run. (Mind that the successor set is computed at each step.) As you can see from Figure 1, the coverage is close to 100%, but it’s not complete. Interestingly, 10 million runs give us 99.9% coverage.

2. Running times for tiny instances

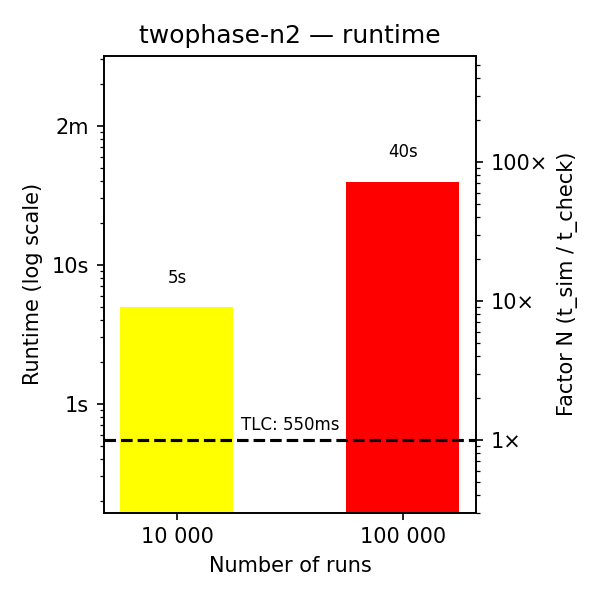

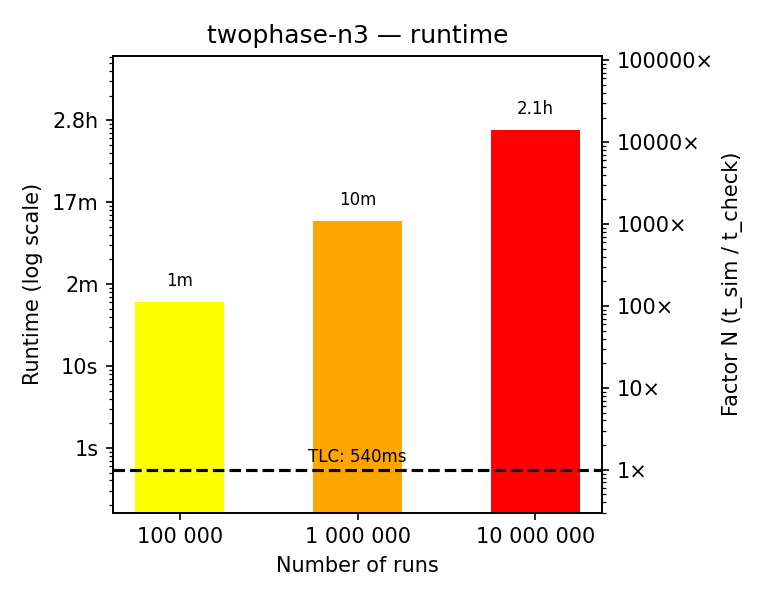

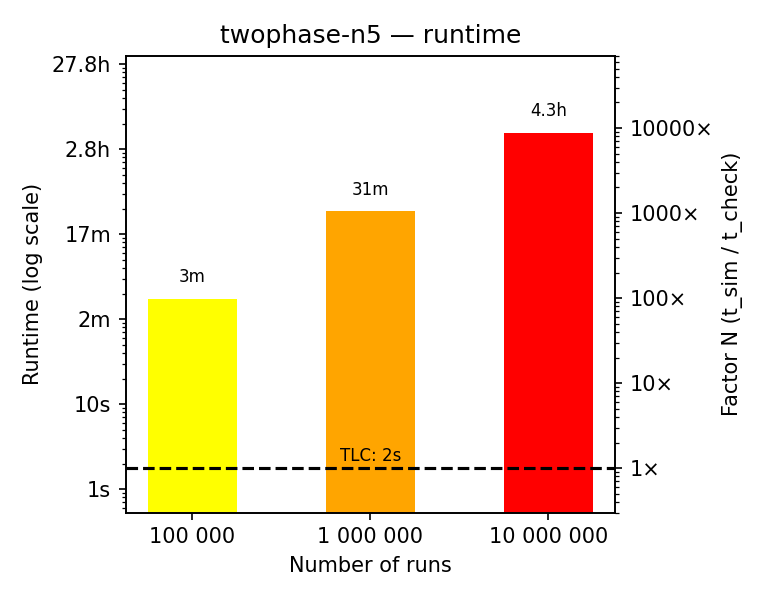

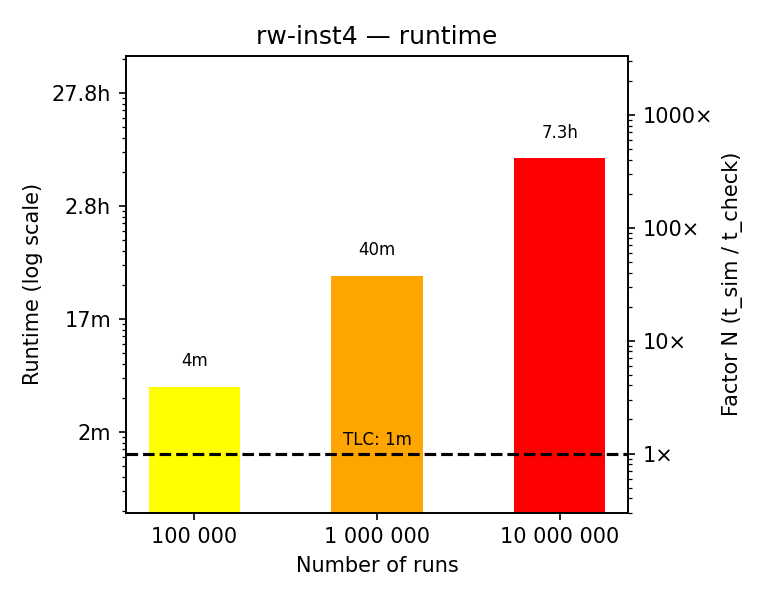

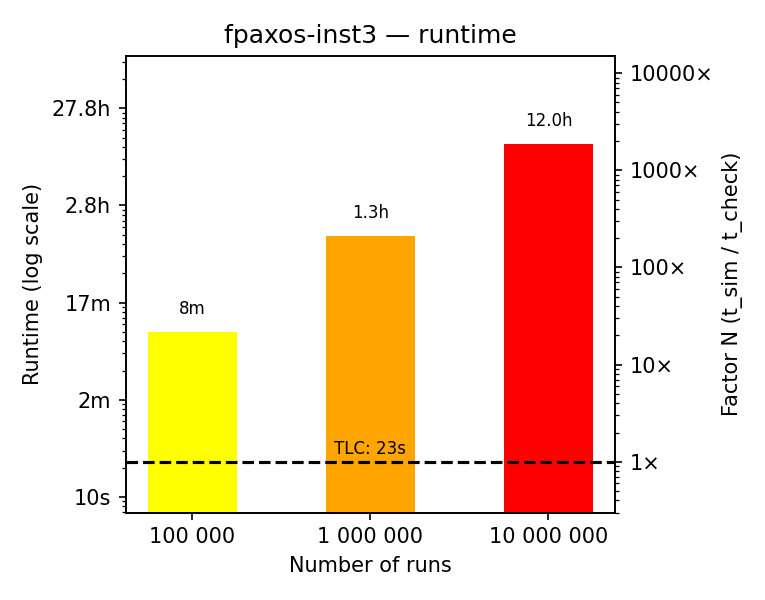

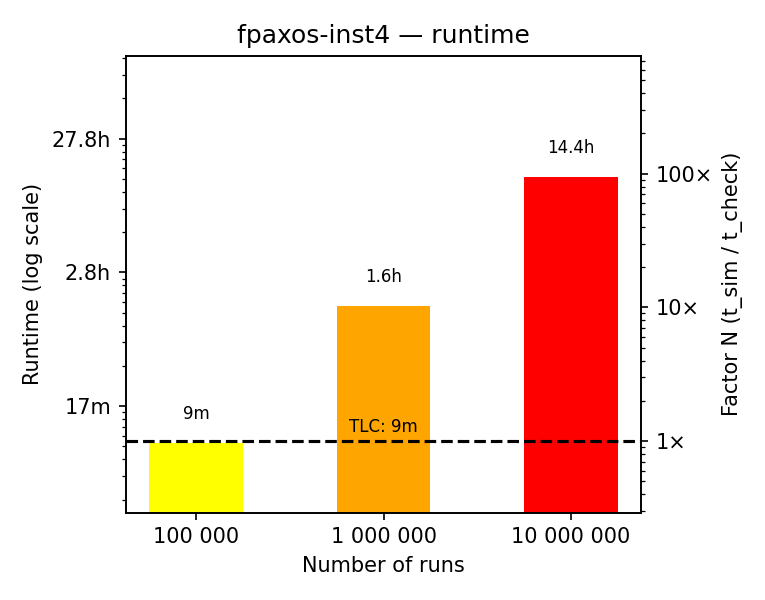

All of the above benchmarks are quite small by the model checking standards. They have tens of thousands of states. It takes TLC only 1-3 seconds to explore the state space and check the invariants for each of these benchmarks.

Figure 2 shows the running times for the above simulation benchmarks. The dashed lines show the running times for the TLC model checker to explore the complete state space. Additionally, the right-hand y-axis shows the slowdown factor of the simulations compared to the model checker. For example, for the two-phase commit benchmark with 3 resource managers, the model checker takes about 2 seconds, while 10 million simulations take about 2 hours, which is a slowdown factor of about 10,000. As you can see, 10 million simulations take hours, where the model checker needs several seconds.

3. Slightly larger instances

What happens if we take the instances that are still small, but have 1-2 participants more? Figure 3 shows the results of running TLC simulations on these instances.

As you can see, with the meaningful default of 100,000 random walks, we achieve poor coverage on readers-writers and FPaxos, though the coverage on two-phase commit is nearly 99%. So this TLC simulations achieve much better coverage on two-phase commit than random walks, but they have comparable coverage on the readers-writers and FPaxos benchmarks! You can also switch between this blog post and random walks to see the difference in coverage between the two approaches.

To stress the message of the previous blog post, these instances are not that large by the model checking standards.

4. Running times for larger instances

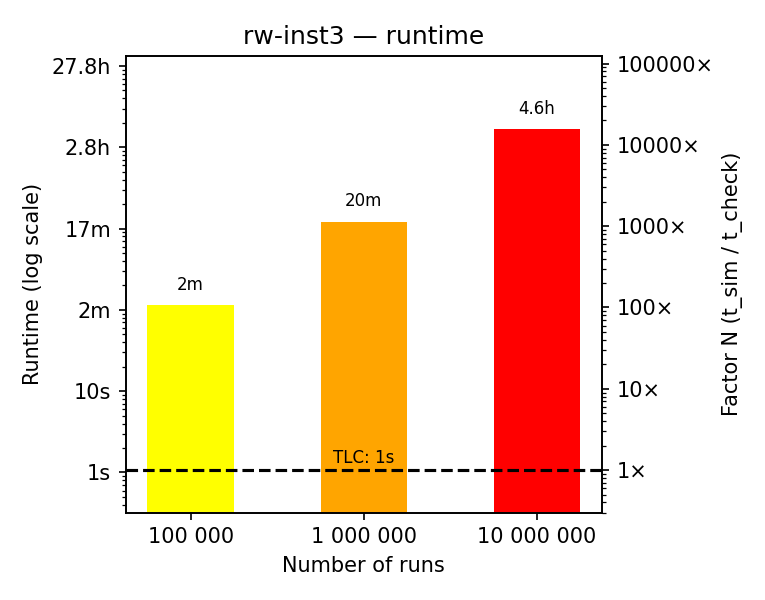

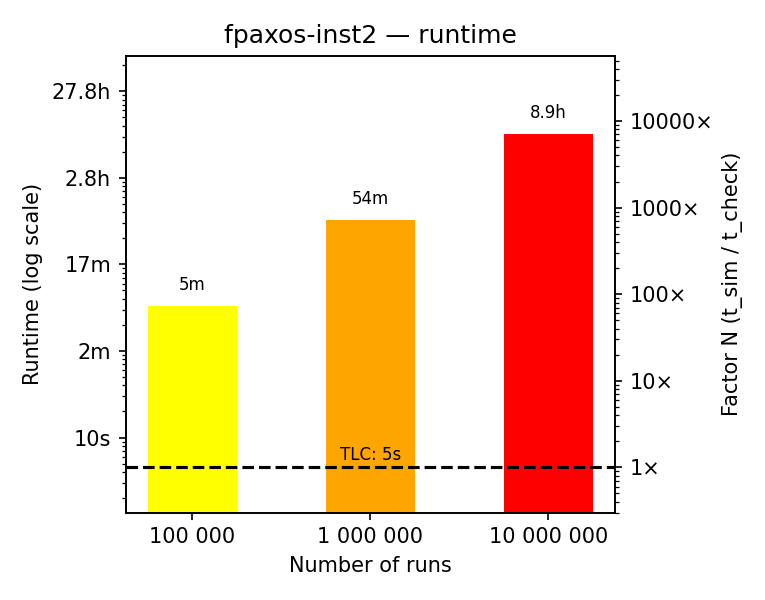

Again, it takes the model checker TLC up to 10 minutes to enumerate all the states and check the invariants for these instances, whereas we have been running the simulations for hours!.

5. Conclusions

I am not going to repeat the conclusions from the previous blog post. They are still valid. The new TLC simulation mode achieves better coverage than the random walks on two-phase commit, but it has comparable coverage on readers-writers and FPaxos. The running times of the TLC simulator with a single-worker are worse than the model checker and the random walks.

Want to talk?

If you want to pick up my brain for a few hours, drop me an email. After that, we can switch to a messenger of your choice (e.g., Signal, Telegram, or others) or have a call. For short consultations, I accept payments via Stripe. I also consider longer-term engagements (from weeks to months), which would require a contract. You can see my portfolio at konnov.phd. I am based in Austria (CET/CEST) and have experience working with clients from the US, UK, and EU.

The comments below are powered by GitHub discussions via Giscus. To leave a comment, either authorize Giscus, or leave a comment in the GitHub discussions directly.