All you need is a simulator? Nope

Author: Igor Konnov

Date: March 09, 2026

Punchline: Testing distributed protocols with random simulation and stateful property-based testing (PBT) is not enough! Yes, running a simulator for days is better than doing manual testing or just running unit tests. But you will miss states, which may expose bugs. Even on very small systems. I have been saying exactly this to many software engineers. Many times. However, whiteboard arguments do not help. As humans, we have a great deal of trust in probabilities, and our intuitive understanding of randomness is often wrong. Hence, I am giving you concrete figures and plots in this blog post. I must admit that my own intuition was also wrong: I expected fewer random walks to be needed to achieve good coverage. For a quick glance, see Quick summary.

Achieving complete coverage with random walks is hard. This is especially important to know, if you are using them to produce test cases for your implementation. It is also crucial to know, in case you generate an implementation of a distributed protocol with AI tools and hope for random walks/PBT to work as an ultimate guardrail.

Don’t get me wrong. I like PBT and simulators (having written the Quint simulator). I believe that these tools are must-have tools for testing. See my recent blog post on Property-based testing, adversarial developers, and LLMs. However, they are not the only tools that we need to make sure that our systems work as expected. This is especially true now, when we do not have time to properly design and review the AI-generated code.

Why now? It has always been difficult to compare search procedures that were developed by different branches of computer science. Everyone wanted to promote their technique as the ultimate winner. Want to compare property-based testing and model checking? Bad luck. Different tools require different inputs. Some are libraries for programming languages (like QuickCheck), some are tools for specification languages (like TLC and Apalache). Now it is much faster to design frameworks, to experiment with multiple search procedures. It is also easier to do reproducible experiments with LLMs. Good times, if you know how to conduct experimental research.

In contrast to the previous blog posts, I do not provide the artifacts for download. AI slop forks are real. It still takes me several days to design and conduct the experiments on a beefy machine, as well as to find the right format to interpret and plot the data. Even with the help of the frontier models, though they are of great help. It only takes 10-15 minutes to repackage the benchmarks and results with an AI tool, having the experimental data. Hence, I am sharing my lab book with the customers and researchers, upon request.

Need this kind of testing for your protocol?

I help teams turn distributed-system behavior into executable specs, model-checking campaigns, and CI-ready conformance harnesses.

Discuss your system →1. Quick summary for the impatient readers

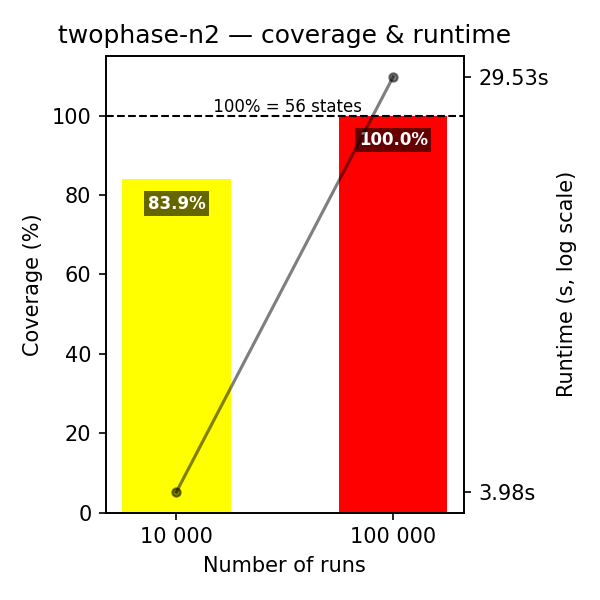

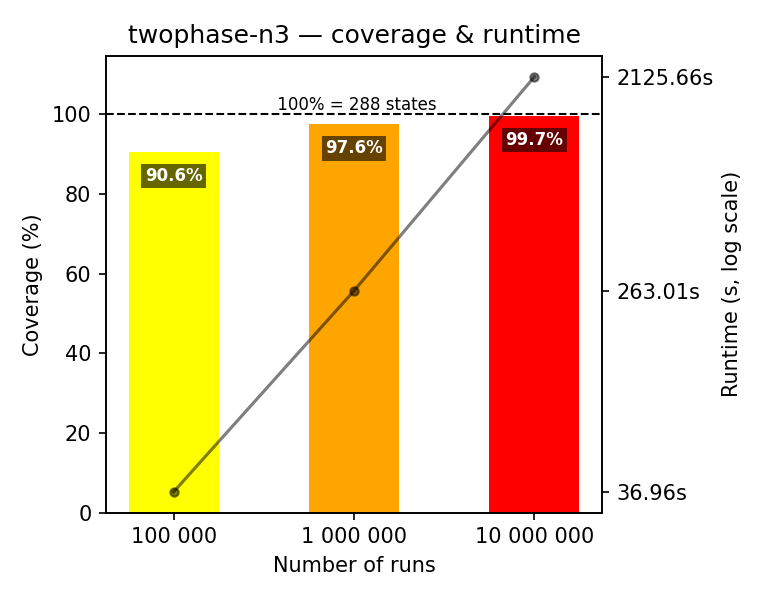

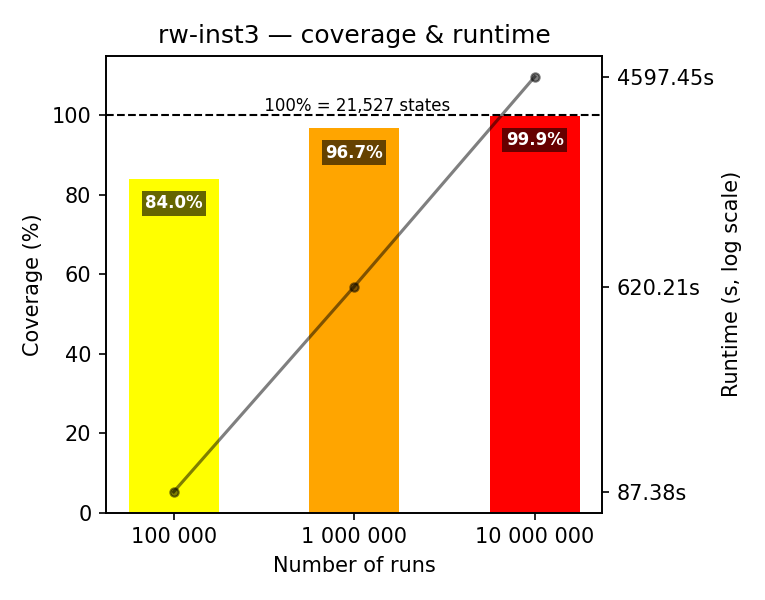

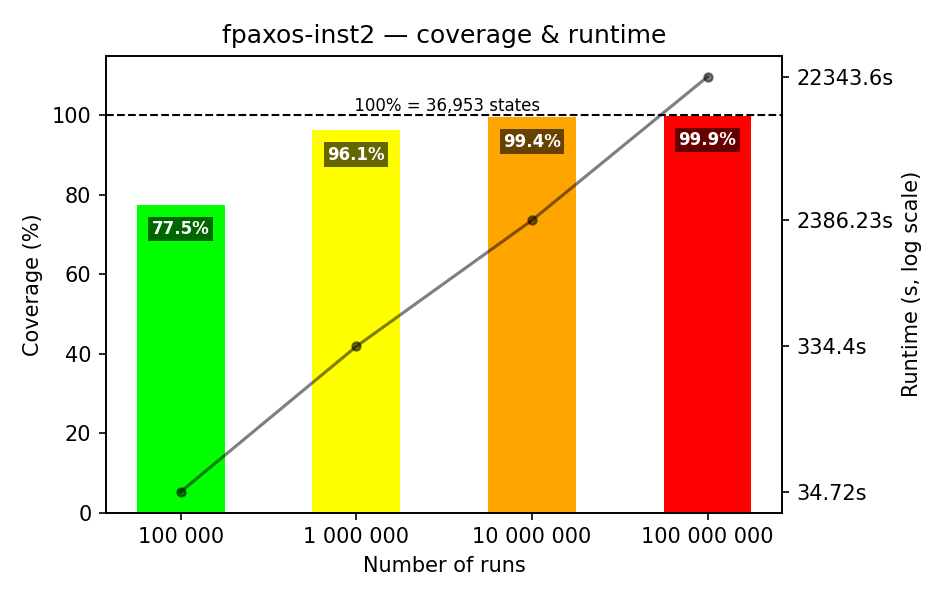

Look at two groups of figures below. They summarize the results of running random walks on specifications of three prominent distributed protocols: two-phase commit, readers-writers, and FPaxos (see Benchmarks). The figures show the coverage achieved by random walks, with 100% being the numbers of distinct states (reported by the model checker TLC). In addition, we plot the running times of the random walks, with the values plotted against the right y-axis. All running times are on a AMD Ryzen 9 5950X processor (16 physical, 32 logical cores), 128 GB memory.

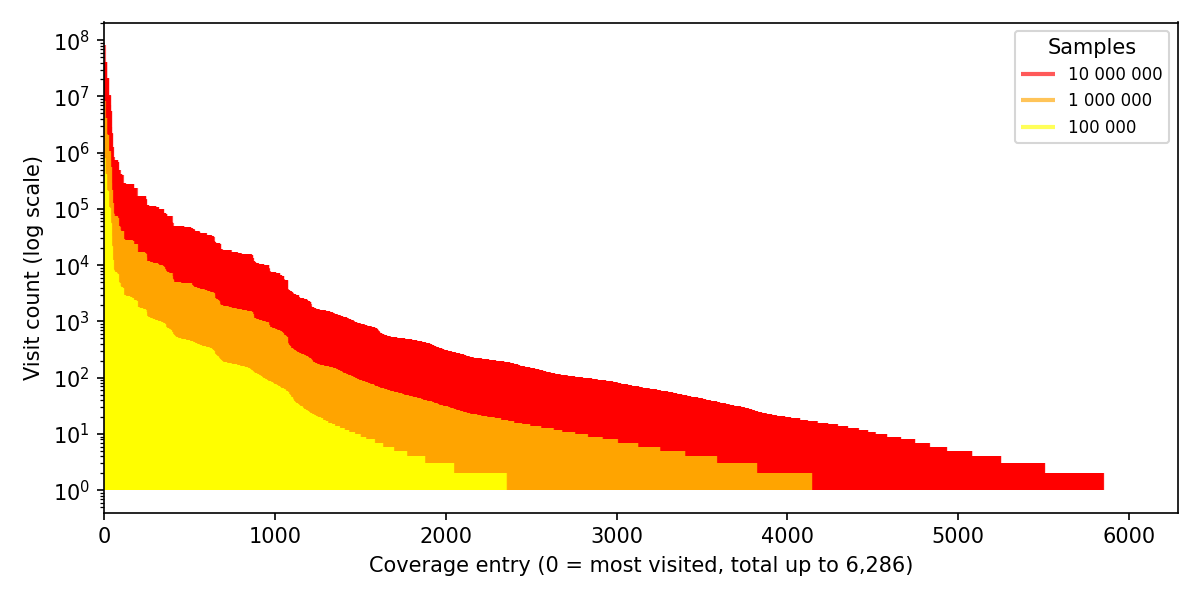

1.1 Coverage for minimal instances

In this set of experiments, we do random walks for the minimal instances of the benchmarks. We start with the meaningful default of 100,000 random walks, with at most 100 steps per walk. As you can see from Figure 1, only in the case of two-phase commit and two resource managers, we achieve complete state coverage. This is not surprising, since this instance has only 56 states. It’s tiny! For two-phase commit with three resource managers and readers-writers with three actors, we achieve 85-90% coverage. This is also in the reasonable range. On FPaxos with two acceptors, we achieve the 77.5% coverage with 100k random walks. This is a bit worrying, since the state space is about 37k states.

The good news is that we can push all of the above benchmarks to achieve over 99% coverage. As you can see in the figures, it takes 10 million random walks to achieve 99% coverage. In addition to that, these runs require 1-2 hours.

All of the above benchmarks are quite small by the model checking standards. They have tens of thousands of states. It takes TLC only 1-3 seconds to explore the state space and check the invariants for each of these benchmarks.

1.2 Slightly larger instances

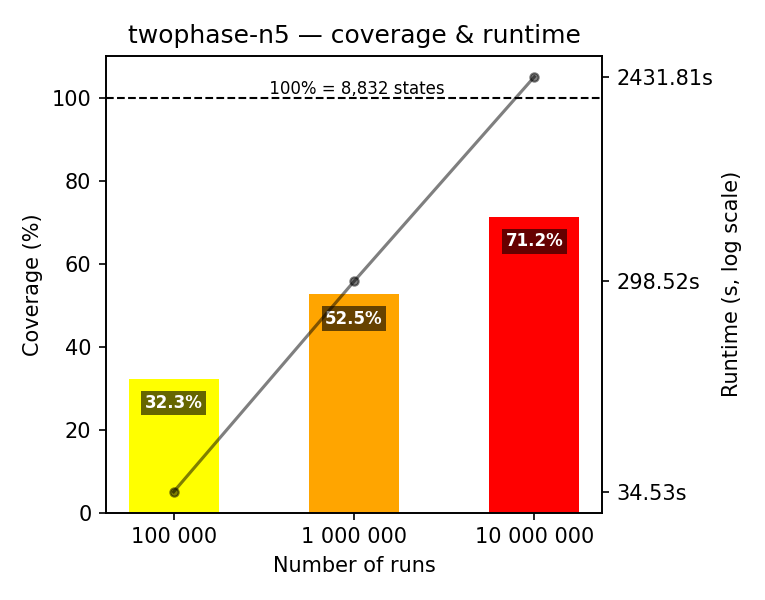

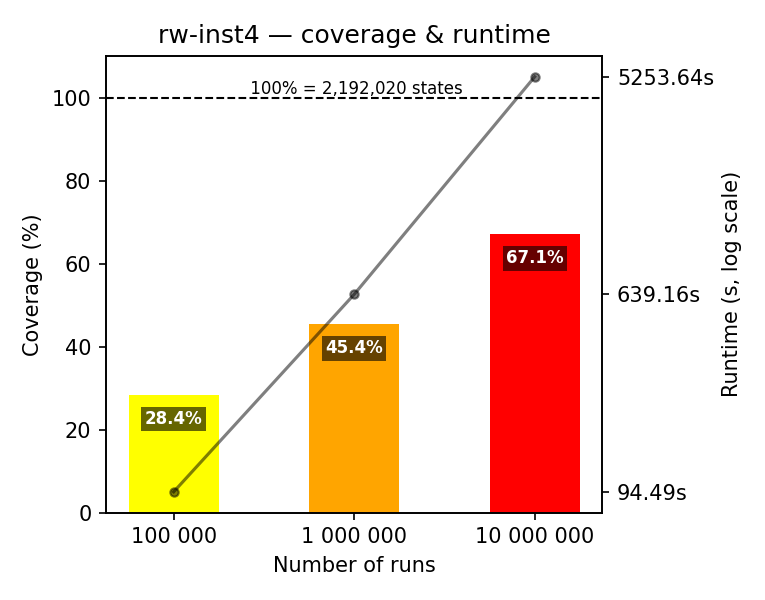

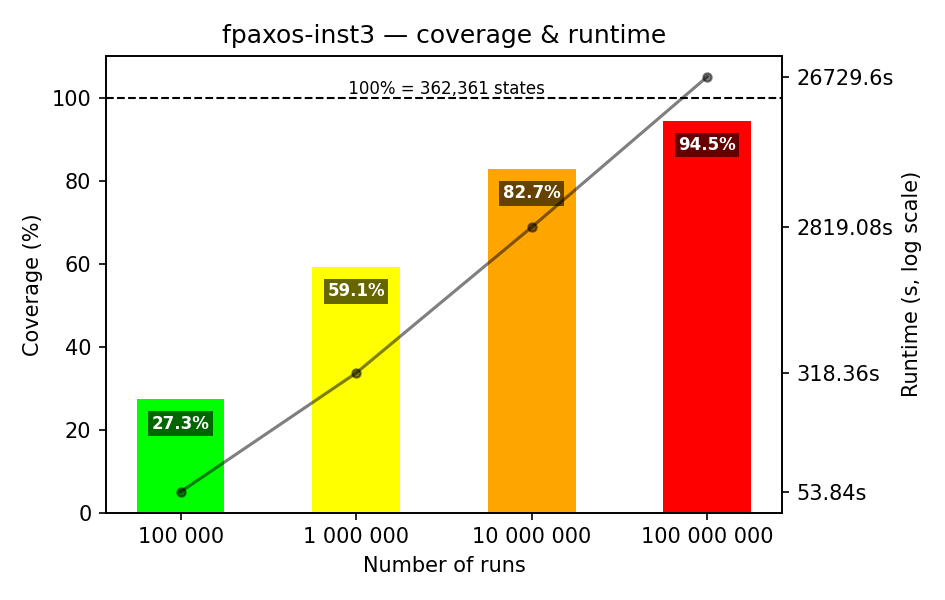

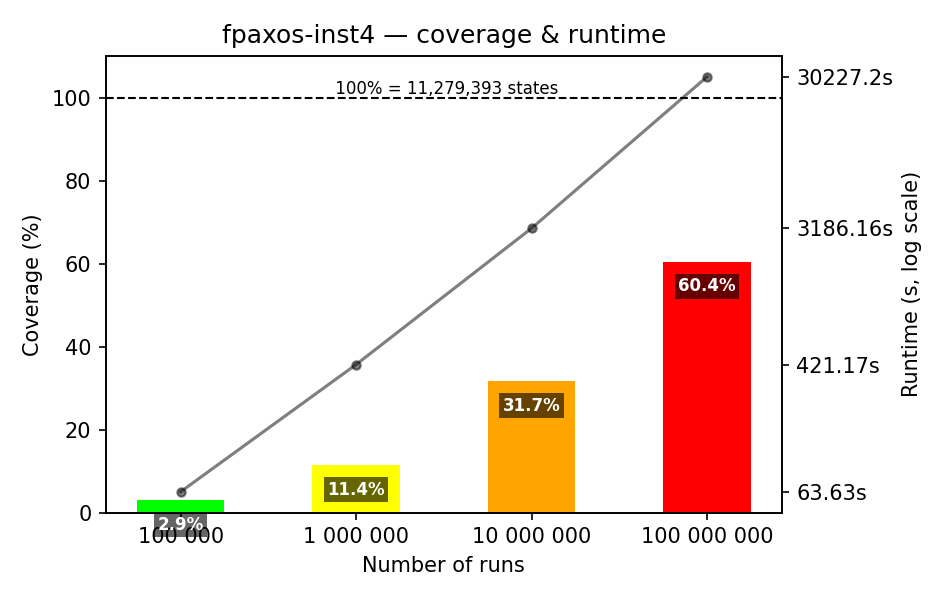

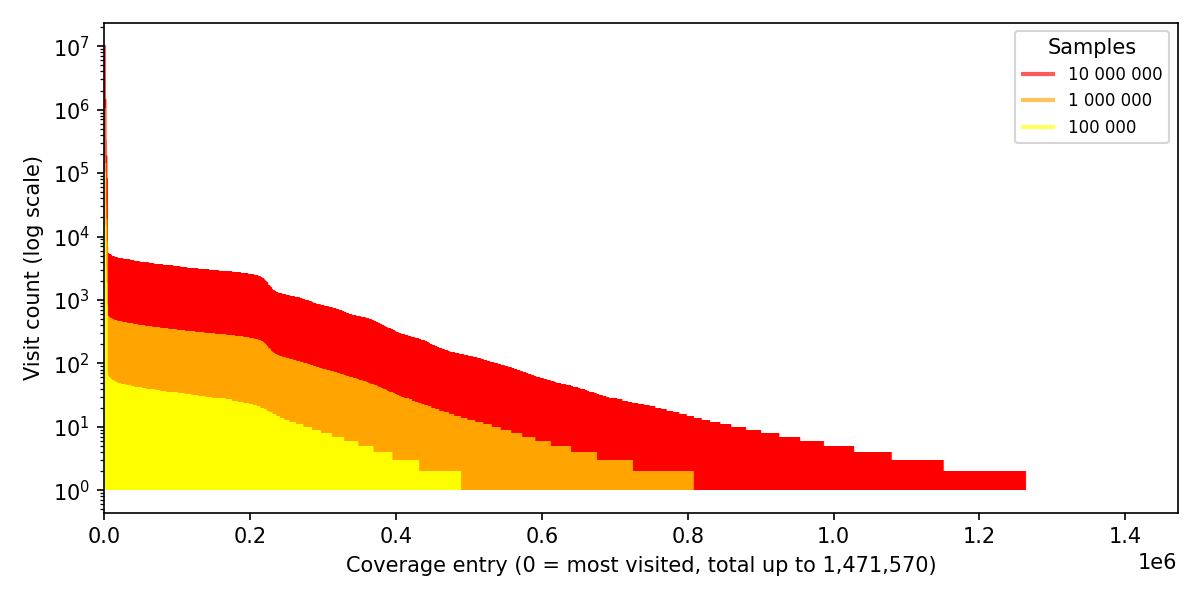

What happens if we take the instances that are still small, but have 1-2 participants more? Figure 2 shows the results of doing random walks on these instances.

As you can see, with the meaningful default of 100,000 random walks, we achieve extremely poor coverage, about 25-30% on the benchmarks up to 2 million states. On FPaxos with 4 acceptors, we achieve only 3% coverage after 100,000 random walks. Really bad!

To see how far we could push the coverage, we did the experiments with 10-100 million random walks. It is clear that in 1-2 hours of simulation we get to 60-80% coverage. It is good, but not great. When we push FPaxos with 3 acceptors to 100 million random walks, we get to 94.5% coverage. Nice, though it took us 7.5 hours to get there. However, on FPaxos with 4 acceptors, we get a poor coverage of 60.4% even with 100 million random walks, which took us 8.5 hours to run. This benchmark has about 11 million states. So it is reasonably large, but, again, not that large by the model checking standards.

Again, it takes the model checker TLC up to 10 minutes to enumerate all the states and check the invariants for these instances, whereas we have been running the simulations for hours! This is especially striking, given that we are running optimized simulators in Rust.

2. The benchmarks

As benchmarks, we use three specifications of distributed protocols. These are prominent examples from the repository of TLA+ Examples:

-

Two-phase commit. This is the famous two-phase commit. The specification is explained in Consensus on Transaction Commit by Jim Gray and Leslie Lamport. You can check the TLA+ specification in TwoPhase.tla.

-

Readers-writers. This is a solution to the Readers-Writers Problem. The TLA+ specification by Stephan Merz can be found in ReadersWriters.tla.

-

FPaxos. This is Flexible Paxos by Heidi Howard, Dahlia Malkhi, and Alexander Spiegelman. The TLA+ specification can be found in FPaxos.tla.

All of the above specifications are parameterized in the number of participating processes. We consider several instances of each benchmark. To give you an idea of their state space size (the number of reachable states), we compute the figures with TLC. The reachable states are called distinct states in TLC, whereas produced states are the number of states that TLC generates during the search. Another important metric is the diameter of the state space, which is the length of the longest shortest path between any two reachable states (read it again!).

As you can see from Table 1, these transition systems are not tiny, but they are actually small by the model checking standards. Surprisingly, they are sophisticated enough to challenge random walks! Distributed protocols are hard.

| Benchmark | Instance | Distinct states | Produced states | Diameter | TLC times |

|---|---|---|---|---|---|

| Two-phase commit | 2 resource managers | 56 | 154 | 8 | 1 sec |

| 3 resource managers | 288 | 1,146 | 11 | 2 sec | |

| 5 resource managers | 8,832 | 58,146 | 17 | 2 sec | |

| Readers-writers | 2 readers/writers | 390 | 935 | 9 | 2 sec |

| 3 readers/writers | 21,527 | 59,674 | 13 | 2 sec | |

| 4 readers/writers | 2,192,020 | 7,069,237 | 17 | 1 min | |

| FPaxos | 2 acceptors | 36,953 | 245,288 | 19 | 4 sec |

| 3 acceptors | 362,361 | 2,697,682 | 25 | 21 sec | |

| 4 acceptors | 11,279,393 | 96,056,172 | 31 | 9 min |

In the experiments, I am using a custom framework to represent the above specifications-as-code that makes it easy to experiment with different search procedures. To make sure that these specifications faithfully represent the original TLA+ specifications, I do the following:

-

do a code review (obviously),

-

automatically translate the specifications to TLA+ and check them with TLC,

-

run a custom-tailored model checker to compute the number of distinct states and check the invariants.

3. What are random walks and state enumeration?

I have mentioned random walks and state enumeration multiple times so far.

Let’s clarify what these terms mean. The concept of a random walk is intuitively

simple, though the details matter. Instead of looking at a large specification,

let’s look at a simple example of a system that models adding and removing

workers from a pool. This example is inspired by the example in Parameterize

Your Actions by Hillel Wayne. We add the variable

count to have a meaningful invariant. The specification is shown below. Even

if you do not know TLA+, it should be easy to understand. If you

still have trouble understanding it, just ask an LLM, they are good at

explaining TLA+ specifications.

EXTENDS Integers, FiniteSets

CONSTANTS

(* The set of workers to choose from. *)

(* @type: Set(Int); *)

Worker

VARIABLES

(* The set of active workers. *)

(* @type: Set(Int); *)

active,

(* The number of active workers. *)

(* @type: Int; *)

count

(* Add a worker w to the set of active workers, if it is not already active. *)

(* @type: (Int) => Bool; *)

Add(w) ≜ w ∉ active ∧ active' = active ∪ {w} ∧ count' = count + 1

(* Remove a worker w from the set of active workers, if it is active. *)

(* @type: (Int) => Bool; *)

Remove(w) ≜ w ∈ active ∧ active' = active \ {w} ∧ count' = count - 1

(* Initialize the system with no active workers and a count of zero. *)

Init ≜ active = {} ∧ count = 0

(* In a next state, either add a worker or remove a worker. *)

Next ≜ ∃ w ∈ Worker:

Add(w) ∨ Remove(w)

(* An invariant: `count` matches the cardinality of the active set. *)

Inv ≜ (count = Cardinality(active))

If we fix the set of workers to be Worker = {1, 2}, we get a nice labelled

transition system (LTS) of 4 states. The graphical representation of this LTS is

shown below.

TLA+ does not have any built-in notion of randomness or

probabilities. It is what is usually called a qualitative specification.

When evaluating Next in a state, we can only evaluate whether a specific

transition is possible under a specific choice of w and the action scheduling

decision (whether to execute Add(w) or Remove(w)). This is the standard

semantics under the definition of behaviors. We can enumerate all reachable

states for the above system by breadth-first search or depth-first search. This

is what the model checker TLC does (it uses breadth-first search). This is what

I will call state enumeration in this blog post.

We could also interpret the choice of w and the action scheduling decision as

a random choice. Since the above specification is small, we can visualize it as

a Markov decision process

(MDP). The states are

the same as in the LTS, but we also attach probabilities to the transitions.

Notice that we assign probabilities for choosing the value of w and for

choosing the action to execute: Add(w) or Remove(w). For example, in the

initial state, we choose w=1 with probability 0.5, then the action Add(1)

with probability 0.5, which gives us a transition to the state where active =

{1} and count = 1 (with probability 0.25). However, if we choose w=1 and

the action Remove(1), we have to backtrack to the initial state, since the

precondition of Remove(1) is not satisfied.

A random walk is a path through the MDP. It is a sequence of states that we get by making random choices at each step. In the above figure, you can see one walk in blue and one walk in red. To avoid too many backward edges, we have a retry budget, typically, 3-10 retries per step. We take this simple approach in our custom framework. It is similar to what the randomized simulator in Quint is doing, though the Quint simulator is trying a bit more locally before backtracking. Probabilities are basically used to produce various random walks. There is no inherent statistical meaning to these probabilities in random walks. This is very much how stateful property-based testing works, too, though PBT frameworks usually use biased coins, instead of uniform ones.

TLC also supports random simulation, but it assigns probabilities differently. Given a state, TLC first computes all successors of the state and then chooses one of the successors uniformly at random. This would give us a different MDP that filters out disabled transitions. Both approaches have their merits and drawbacks. The approach of TLC requires us to enumerate successors, unless we use reservoir sampling. It would actually work better on the examples in this blog post, since they have many disabled transitions. However, in systems that inject faults, this approach has an issue, as the faulty transitions often dominate the search.

4. Which states are missing?

Since we can measure state coverage now, the next question is: What are these states that we are missing? Maybe these states are not important at all. To check that, I ran the random walks for the two-phase commit benchmark with 2 resource managers for 10,000 instead of 100,000 runs. Conveniently, exactly one state was missing from the coverage. As our specifications are code, I just asked Claude to instrument the search to experimentally evaluate the visit frequencies per run for each reachable state. Figure 6 is quite detailed. Click on it to see the full-size version.

As we can see, the missing state (with the frequency of 0) is the state where the transasction manager aborts the transaction, one resource manager also aborts the transaction, and the other resource manager is in the “prepared” state. This is an interesting state in this protocol, as the other resource manager still has the potential to commit the transaction, though it should not do that.

Bottom line: We may miss important states with random walks.

5. More coverage plots

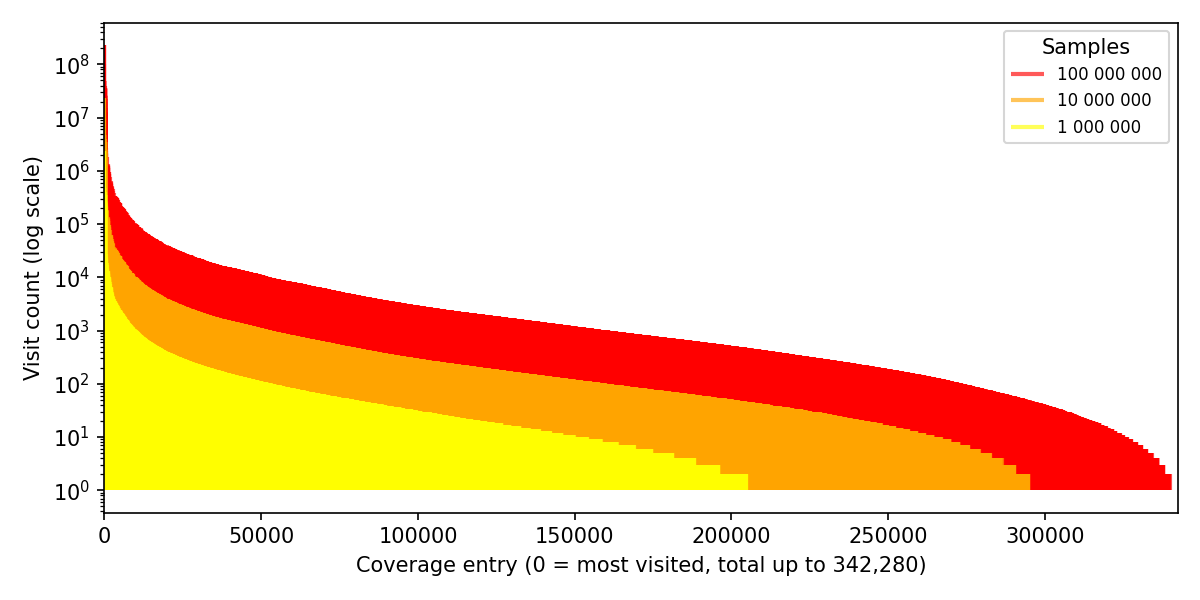

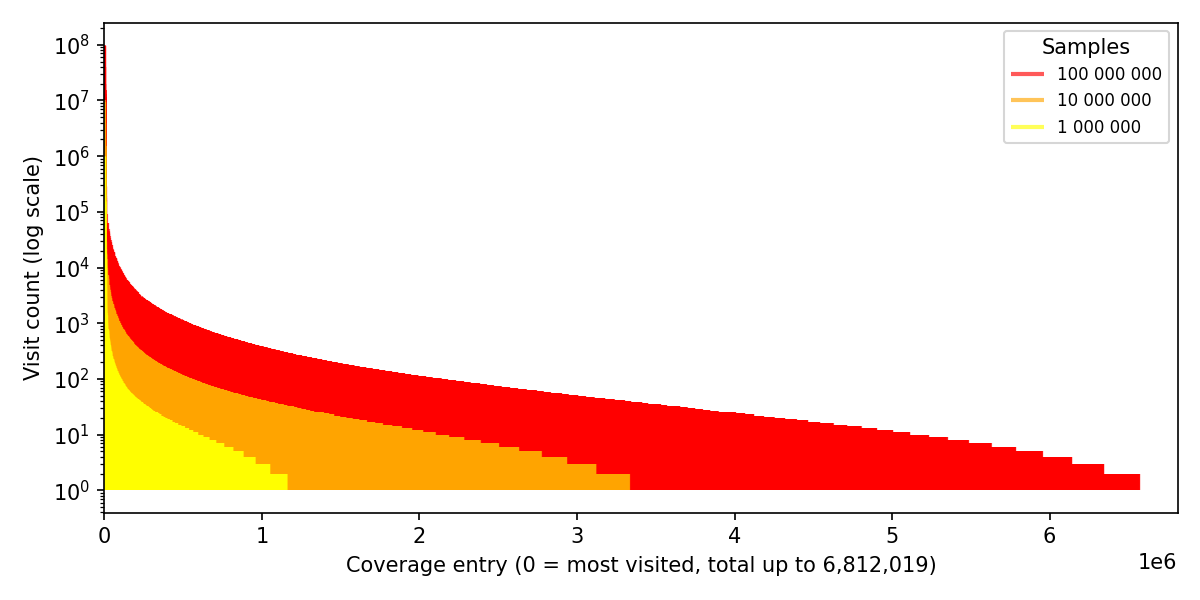

Figure 7 shows the coverage evolution for the large instances of the benchmarks. With this, we can see how increasing the number of random walks helps to increase the coverage. It also demonstrates the growing volume of covered and missing states.

I wanted to share these flame plots with you. I find them cool.

6. Conclusions

Random walks are not sufficient to achieve complete coverage except for very small state spaces. Moreover, random walks take significantly longer than the model checker. This is especially striking, given that we are running optimized simulators in Rust. Another issue with state coverage by random walks is that you would not even know that you achieved complete coverage. You can measure the speed of discovering new states, but understanding that the simulator has converged basically requires a model checker.

Interestingly, random walks behaved badly on FPaxos with four acceptors. This is a relatively benign benchmark, not having a state explosion like specifications of Byzantine consensus protocols (BFT). In BFT, the minimal configurations contain 4-6 replicas, depending on the protocol. Hence, we should expect a significantly worse coverage by random walks on BFT.

Why do engineers keep running randomized experiments? Well, it is relatively easy to write a simulator. (It is not that easy to write one that actually works!) I have seen people playing with action distributions in the simulator, just to drive the search towards “interesting” states. Whenever I was asking, where the distributions were coming from, they could not explain this. Simulators are deceptive. You have to understand what you are doing, or, better, incorporate feedback. The most basic feedback is state coverage, though we can implement more sophisticated feedback mechanisms.

From our experiments it may look like state enumeration is all we need. I would argue that it is true as long as the set of reachable states fits into memory. We do not have to store the states directly in memory, practical model checkers store hashes of states. We can go as far as to store 2-3 bits per state, assuming that collisions are acceptable (still better than random walks!). Having a machine with 128 GB of memory, we can store roughly 50 billions of states. This is way more than the number of states in our benchmarks – dozens of billions vs. thousands and millions.

There are cases where randomness may find bugs, where state enumeration gets stuck:

-

Value domains are quite large. For example, if we choose values from the set of all 64-bit integers, it is not feasible to enumerate all successors even for a single state. A random walk can still do some progress without getting stuck. One can argue that choosing a value from the set $[0, 2^{64})$ uniformly at random is shooting in the dark, but sometimes it helps us to find bugs, especially if the large set has just a few large equivalence classes. Arguably, one should be able to apply data abstraction in this case. Also, this is usually the moment when you should consider using a model checker that supports symbolic representation of states, like Apalache.

-

Guided search. If we have an heuristic that guides the search towards interesting states, we can achieve better coverage with random walks faster. Maybe we use reinforcement learning to learn such a heuristic. Maybe we use an LLM to predict which actions are more likely to lead to interesting states. The main issue is that it is quite hard to find a direction for the search in the state space of distributed protocols.

Want to talk?

If you want to pick up my brain for a few hours, drop me an email. After that, we can switch to a messenger of your choice (e.g., Signal, Telegram, or others) or have a call. For short consultations, I accept payments via Stripe. I also consider longer-term engagements (from weeks to months), which would require a contract. You can see my portfolio at konnov.phd. I am based in Austria (CET/CEST) and have experience working with clients from the US, UK, and EU.

The comments below are powered by GitHub discussions via Giscus. To leave a comment, either authorize Giscus, or leave a comment in the GitHub discussions directly.